After a bit of a journey to get to SuccessConnect, I have to say my conference has been pretty great. (Couple of highlights in the pics above, seeing a cool AI use case and chatting to Amy and Aaron.)

I promised Jon Reed that I’d do a recap blog post on how what I saw at SuccessConnect matched, underwhelmed or exceeded my expectations – as I’d laid out in my prior post. So here goes!

AI… was there any mention of AI? OMG, you couldn’t stop hearing about it. The keynote was AI, every session had a reference to AI, current or future plans, it was everywhere except the Tuesday night party. Meg Bear did make a good point – expecting to not hear about AI at a tech conference would be more remarkable.

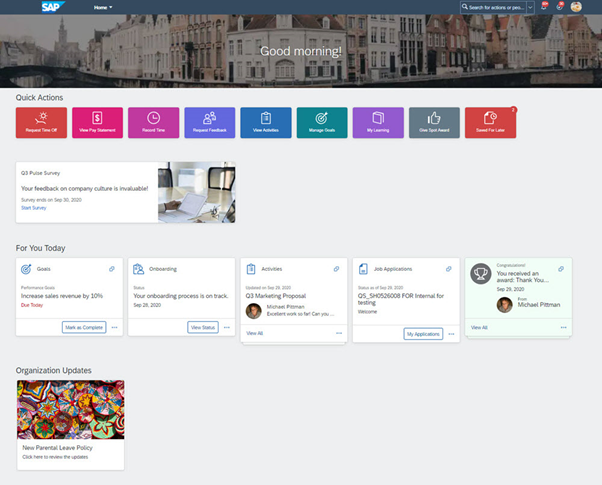

However, I did see a demo of using generative AI to put together a performance goal that really did wow me. (First photo in this post.) A simple use case, but it took my prompt for a goal of “create an SAP BTP SuccessFactors extension application” and turned it into a SMART goal, added a bunch of very relevant steps, and (SAP will be happy) even suggested I add it to the SAP Store. All with a set of reasonable dates and details for each step. I was impressed, there are very few people I know who could have come up with a better representation of that idea as a performance goal.

My only worry, now, is that creating goals is going to be far too easy and fun for my team if they were to use this (we use SAP SuccessFactors internally at Discovery) and I’m going to end up reviewing a stupid number of goals, but at least they would all be SMART ones! But to that point, my next thoughts were around how this is going to be paid for, because it can’t be “free”! Generative AI costs money to run. This took a little more digging into, but I was happy to find an answer that made sense to me.

SAP, after realising that building their own LLM tooling was silly and that others do it better, have chosen not to go with only one LLM/AI/ML platform but instead are using multiple. The SAP-wide chat agent “Joule” is being powered by IBM Watson, the goal setting piece that wowed me is being powered by Microsoft. In the end the customer shouldn’t really care which underlying engine does the processing, as long as it doesn’t leak personal or company data (and I’m pretty sure that SAP are making sure that doesn’t happen.) But on the other hand, customers shouldn’t have to purchase subscriptions for each piece of AI logic they use. And this is what I’m told will happen – a single SAP-wide subscription to AI services that can be used on perhaps a pay-as-you-use basis – that bit apparently not 100% sorted yet – but the single subscription model sounds great.

I had some great conversations with a few people about the potential for vendor lock-in coming from AI – or at the very least the need to provide much more historic data in any system migration to “train” your AIs on your company’s data (with the associated uptick in migration costs meaning that there will need to be some really compelling reasons to migrate.)

This will be more relevant for some areas than others. Whilst Holger assured me that technically import/export of trained models will be possible, I’m not seeing that happening. No vendor is going to let you bring your AI training with you when you leave the platform, you’re going to have to re-train, and that is going to mean migrating LOTS of data. There may be scope for data load that isn’t “in-system” just for training of AIs – I think this is a new problem that hasn’t happened yet, but there may be some benefits for first movers in this space – let’s see what happens.

Opportunity Marketplace – Yeah, was mentioned quite a lot, references to it from pretty much all tracks apart from payroll. I should have made that one a drinking game. But customer stories on how it had benefited them, I didn’t hear too many of. The one customer I was hoping to hear from had pulled back from implementing due to a desire to reset all the Talent Intelligence Hub links and the skills and competency model in their organisation. So I’m still looking for those stories that can help me justify the implementation to a new customer. I still think it’s a great tool and I like it, but just because I like it doesn’t mean it solves a problem for a customer well enough that they will buy it. I think the problem here might be that with so much new cool stuff happening in Talent Intelligence Hub, customers are struggling to figure out how to get their houses in order to benefit. They all know they will benefit, but deciding on which skills your organisation uses, that’s not as easy a task as you might think it could be!

Recruitment – well I can tell you they heard LOUD and clear the feedback about removing the drag and drop in the new recruiter experience. The new experience is here and aligns with Horizon theming in the rest of the platform. Some cool use cases for AI in allowing detection of skills in candidate CVs and allowing comparison of candidates by skills alignment with role. I think we will be entering into the new war of AIs – with AIs generating candidate CVs to make them as attractive as possible to the AIs that are pulling the details out of them. This is going to be a space to watch.

Learning – No surprises here, the new experience looks great, lots of discussion about how this will work with new skills and competencies. I think biggest thing to come here (apart from the new experience) is the offline mobile experience for Android. That will make a difference for many, especially the “deskless” workers.

Talent Intelligence Hub – again, nothing unexpected. Great news is that it seems the SAP teams responsible for the product are as genuinely excited as I am about handling the difference between employment level info and person level info – looks like we are aligned on this one. Now just looking to see how to ensure it happens. I hope we see some significant customer adoption of this solution as pretty much every new non-payroll enhancement to the suite appears to be based off it.

Concurrent Employment/Temporary Assignments/Higher Duties – Okay, it wasn’t as hidden as I thought it might be, but it certainly didn’t get the exposure it deserves. Great thing is I met with Farah Gonzalez who is the VP, Global Product Management for Public Sector. Kicking myself for not having taken a photo of the two of us, but here’s her LI profile shot – given the effort gone to here, seems a shame not to share!

Anyway, I have found someone to share all my hopes, dreams, expectations and disappointments for this area of the product and we’re setting up a meeting to discuss, I’m very much looking forward to that (once I get over the jetlag and backlog of customer stuff from being at #SuccessConnect.) I still think these solutions are very relevant for organisations that aren’t public sector and very much play into the #FutureOfWork type discussions. But making connections with people like Farah is exactly why going to SuccessConnect in person is so valuable for me (and my customers!)

Payroll – Well, this is interesting. Next Gen Payroll has a new name – SAP SuccessFactors Payroll – and will be in early adopter (as in productive usage!) for some customers implementing in the UK this year for hopefully go-lives next year. US version to follow, with Germany, Canada and Australia to follow some undetermined time later. Likely target market for the near future will be smaller organisations with payrolls of less than 1000 emps, so not really your legacy SAP payroll or ECP customers yet. Hoping that means my company might be a possibility when the Australian push happens, we have both S/4HANA (public cloud) and SAPSF… (hint, hint any SAP people reading this!) A LOT of discussion around customers needing more certainty on timelines and SAP not being able to give this, which I found a little frustrating (not that SAP couldn’t communicate a timeline, but that we spent so long discussing it. But it is worthwhile that SAP knows how frustrating that is. So I guess I can handle a little frustration for the global good.)

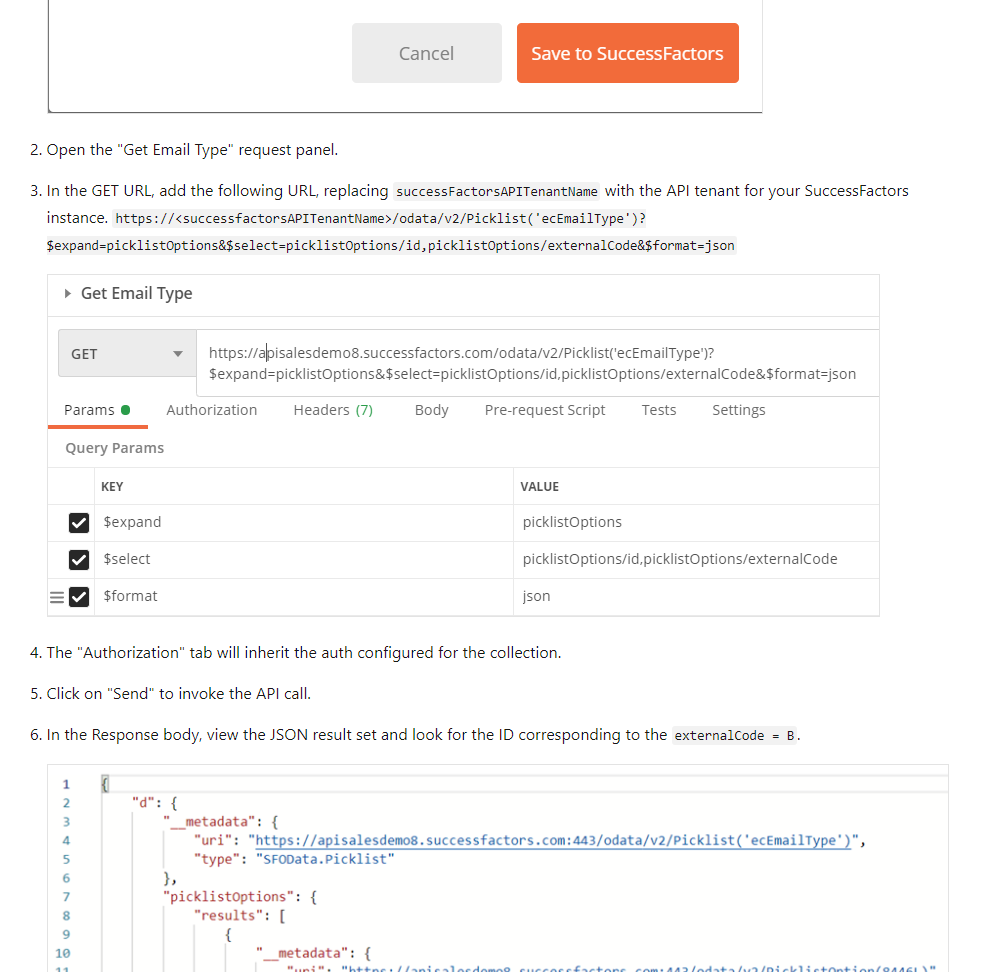

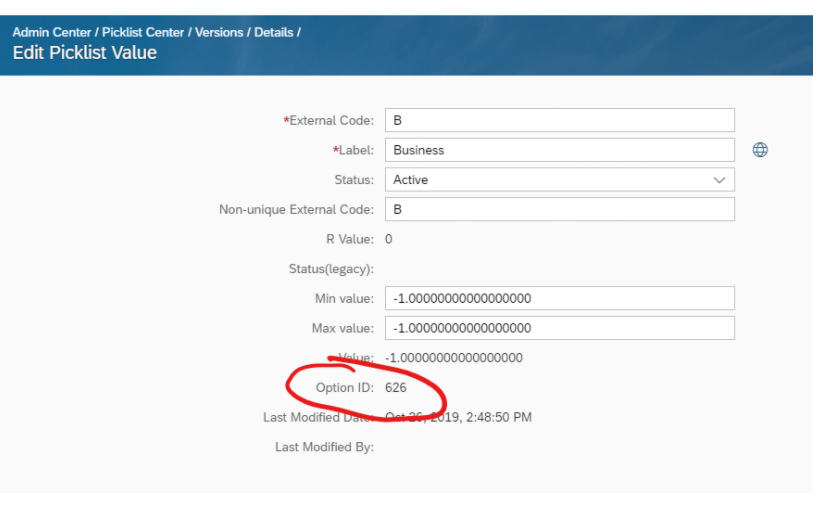

APIs/Third Party Cookies deprecation handling/Audit logging – really enjoyed a couple of sessions around the more “techy” areas of SAP SuccessFactors. There is probably enough there that I could fill an entire post by itself – so I probably will. But highlights/call outs:

- The poor guy running the main tech roadmap session hadn’t been pre-briefed on who I was (evil grin) and probably let me ask too many questions,

- Had multiple customers come up to me afterwards and thank me for asking those same questions!

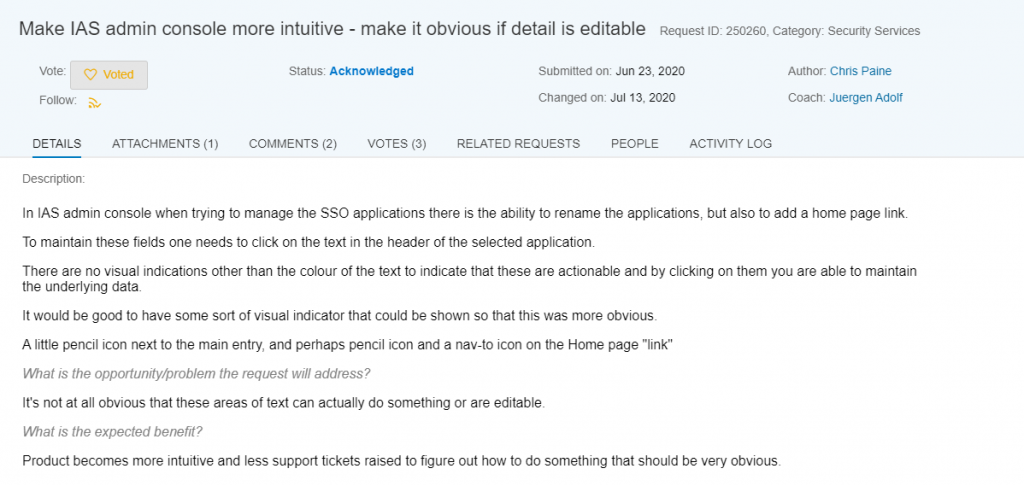

- Very surprised that SAP didn’t use the conference as an opportunity to pre-brief customers on upcoming URL changes to ALL SAPSF instances and IAS/IPS instances.

- We’re moving away from OData! Seems that SAP isn’t pushing CAP into its own products, because SAPSF is going to move to a REST based API and away from OData. The things I heard about streaming/websocket APIs are apparently not going to happen (boooooo), but the good news is that the new framework for API development should be MUCH simpler for SAP to implement internally, so getting an external API to new functionality should be much faster and simpler. I think there will be a lot to play out in this space.

- Finally, Audit and access reporting functionality is coming back. Audit logging will be using the SAP BTP Audit logging functionality – thus ensuring compliance with all those tricksy global compliance legislations. Access log reports will also be available shortly (if not this coming release – I honestly can’t remember, but will be checking release notes and the slide decks when I get them.)

Overall

Thanks to the amazing Liliana Zolt who helped make the whole experience so smooth over the three days of the conference, huge appreciation. Was wonderful to catch up with the undeniable brains-trust of the SAP SuccessFactors consulting world, all the interactions with everyone were fantastic and the people we spoke to on the SAP side were all amazing and so willing to make the time to chat to us. Was amazing to be there. Really looking forward to next year in Lisbon (now just to figure out how to convince the company to pay for an even longer flight!)

- *Updated to note SAP SuccessFactors Payroll expected to be live for some limited initial customers *next* year, not this year, just implementation this year! Still very exciting though!